40 Million People Ask ChatGPT a Health Question Every Day. HIPAA Doesn't Cover Any of It.

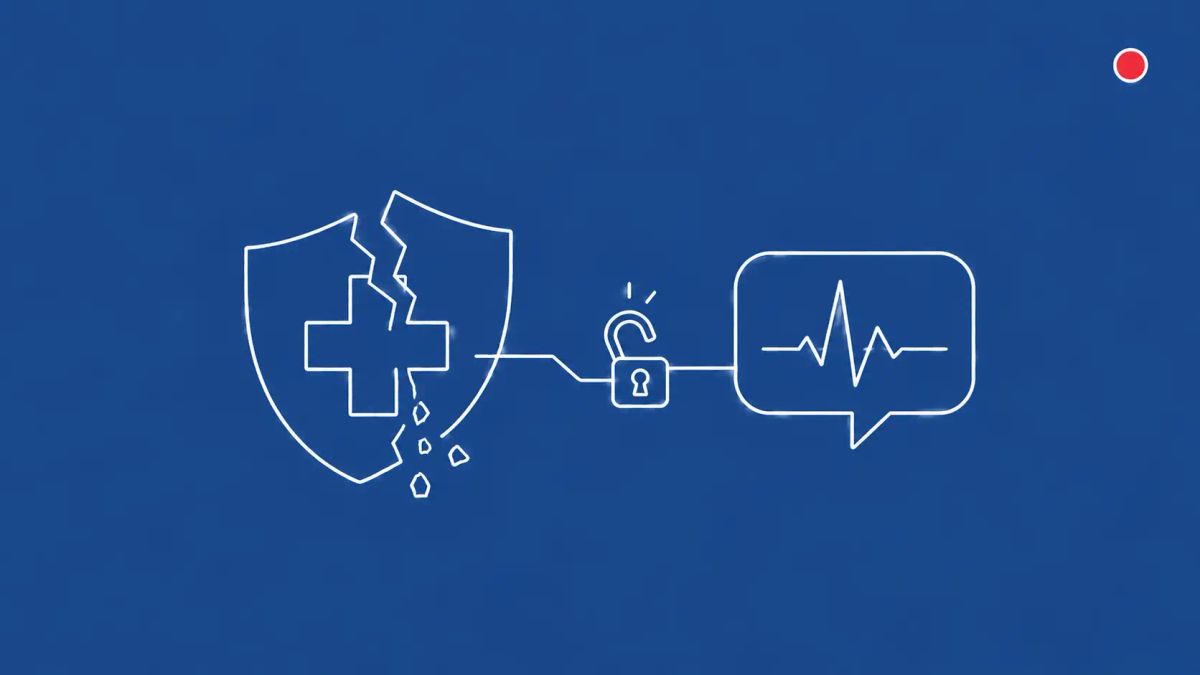

More than 40 million people ask ChatGPT a healthcare-related question every day. OpenAI's ChatGPT Health and Anthropic's Claude for Healthcare are not HIPAA-compliant for consumers — and that is by design.

The vendors say their consumer products "support HIPAA" or are "HIPAA-ready." Neither claims compliance. The distinction is doing all the legal work.

WASHINGTON, D.C. - More than 40 million people ask ChatGPT a healthcare-related question every day, accounting for more than five percent of all ChatGPT messages worldwide, according to OpenAI. Yet OpenAI's consumer ChatGPT Health and Anthropic's Claude for Healthcare are almost certainly not regulated as HIPAA covered entities, according to legal experts at the Center for Democracy and Technology (CDT) and the Future of Privacy Forum quoted in CyberScoop's reporting. The result, as CDT senior counsel Andrew Crawford framed it: a large and growing number of companies not bound by HIPAA privacy protections are collecting, sharing, and using sensitive health data under privacy regimes set by Terms of Service rather than federal law.

The vendor positioning is deliberate. OpenAI's enterprise-grade ChatGPT for Healthcare product "supports HIPAA compliance" and OpenAI will sign Business Associate Agreements with hospital customers. Anthropic's Claude for Healthcare is "built on HIPAA-ready infrastructure" and offers BAAs to healthcare enterprise customers via the Claude API. But the consumer-facing ChatGPT Health and Claude Pro and Max products that hundreds of millions of people use are not covered by BAAs and are not regulated by HIPAA. As Vanderbilt biomedical informatics professor Bradley Malin put it to TIME, when a consumer provides data directly to a technology company that is not providing health care services, it becomes a contractual agreement between the individual and the company. The same legal gap covers Anthropic's AI platform privacy work in the broader 2026 AI vendor security landscape.

| Who is affected | Why it matters |
|---|---|
| Healthcare CISOs and compliance officers | Patient and staff use of consumer AI tools creates novel HIPAA exposure |
| Health plan and benefits administrators | Employee wellness apps and EAP integrations may flow through AI vendors |
| Privacy and consumer protection teams | State AGs and FTC are negotiating the regulatory gap in real time |
| Individual consumers | Health data shared with consumer AI is governed by TOS, not HIPAA |

The products, the launches, the scale

OpenAI announced ChatGPT Health on January 7, 2026, as a dedicated section within the ChatGPT user interface for uploading medical records, connecting Apple Health and Function diagnostic data, and asking health-specific questions. OpenAI partnered with b.well, an AI-powered digital health platform, to enable medical records connection for U.S. patients. Anthropic followed within days, announcing Claude for Healthcare on January 11, 2026, with parallel consumer integration through Claude Pro and Max subscriptions that lets users grant Claude secure access to medical records, fitness data, and lab results. Both products explicitly emphasize that user health data is not used to train models and that users can disconnect or delete data.

The scale matters. OpenAI's 40 million daily healthcare questions represent a structural shift from pre-AI health search behavior. Studies have found large language models proficient at medical diagnostics, with one academic paper describing their performance on specific narrow tasks as superhuman relative to a human doctor. The use case is real; the question is which privacy regime governs the data flow.

The HIPAA gap, explained

HIPAA, passed in 1996, regulates health plans, healthcare clearinghouses, healthcare providers, and the business associates who handle protected health information (PHI) on their behalf. It was designed to enable the digitization of medical records while protecting them inside that regulated ecosystem. It was not designed to regulate technology companies that receive health data directly from consumers who choose to share it. As Future of Privacy Forum's Brittany Geoghegan framed it, HIPAA was meant to help digitize records, not stop tech companies from gathering health data outside the doctor's office.

The practical consequence is two parallel data flows for the same patient health information. A patient who shares blood pressure readings with their cardiologist's electronic health record has HIPAA protections, OCR enforcement, and a BAA chain governing every downstream processor. The same patient sharing the same readings with ChatGPT Health has Terms of Service protections, FTC Section 5 jurisdiction over deceptive practices, and a company that can update its privacy policy at any time. Center for Democracy and Technology's Andrew Crawford emphasized to CyberScoop that policy gaps put sensitive health information in real danger because each company sets its own rules.

What the vendors do and do not claim

OpenAI says ChatGPT Health adds encryption and isolation features that compartmentalize health conversations, along with multifactor authentication, encryption at rest and in transit, and a 30-day chat deletion feature. It also commits that the data is not used to train foundation models. Health conversations are kept separate from regular ChatGPT chat history. OpenAI positions the product as designed to support health care, not replace it, and says it is not intended for diagnosis or treatment. OpenAI says it consulted more than 260 physicians across dozens of specialties during a two-year development cycle.

Anthropic emphasizes that health data shared with Claude is excluded from the model's memory, is not used to train future systems, and is accessible only with explicit user opt-in that can be rescinded. The enterprise Claude for Healthcare product is built on what Anthropic calls HIPAA-ready infrastructure, with BAA support for hospitals and health systems. The carefully chosen terminology, "HIPAA-ready" and "supports HIPAA," signals both companies' awareness that they cannot claim full compliance for consumer products.

What this means downstream for healthcare CISOs

The question for hospital CISOs and compliance officers is not whether to permit ChatGPT Health and Claude for Healthcare use, but how to govern the inevitable data flows. Clinicians are already using consumer AI tools for differential diagnoses, drug interaction checks, and treatment summaries, sometimes with patient identifiers included. Patients are arriving at appointments having pre-consulted AI chatbots. Employees in health plans are uploading wellness data to consumer apps that connect to corporate benefits programs. Once the data reaches a consumer AI tool, none of these flows is protected by HIPAA, but each raises downstream questions about what the organization knew, and when, about how its employees and contractors handle PHI.

The CyberSignal Analysis

Signal 01. Vendor positioning language matters legally and operationally

"HIPAA-ready" and "supports HIPAA compliance" are not the same as "HIPAA-compliant." The carefully chosen language reflects deliberate legal positioning by both OpenAI and Anthropic. For healthcare CISOs reviewing AI vendor procurement, the operational rule is simple: a BAA must be in place and signed before any patient data flows to the vendor. Marketing copy is not a compliance posture. The enterprise products from both vendors are designed to enable BAAs; the consumer products are not.
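One way to make the "BAA before data" rule hard to bypass is to encode it as a gate in whatever inventory or routing layer decides which tools may receive patient data. The Python sketch below is illustrative only: the `AiVendorProduct` record, its fields, the `may_receive_patient_data` function, and the example BAA date are all hypothetical, not part of any vendor's API or any real contract. Note that marketing language is deliberately not an input to the decision.

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional


@dataclass(frozen=True)
class AiVendorProduct:
    """One AI product in a procurement inventory (hypothetical schema)."""
    name: str
    baa_signed_on: Optional[date]  # None means no executed BAA is on file


def may_receive_patient_data(product: AiVendorProduct, today: date) -> bool:
    """Return True only if a signed BAA is on file and was signed on or before today.

    Claims such as "HIPAA-ready" are intentionally ignored; only the
    executed agreement counts.
    """
    if product.baa_signed_on is None:
        return False
    return product.baa_signed_on <= today


# Illustrative records; the BAA date is invented for the example.
enterprise = AiVendorProduct("ChatGPT for Healthcare", date(2026, 2, 1))
consumer = AiVendorProduct("ChatGPT Health", None)

print(may_receive_patient_data(enterprise, date(2026, 3, 1)))   # BAA in force
print(may_receive_patient_data(enterprise, date(2026, 1, 15)))  # BAA not yet signed
print(may_receive_patient_data(consumer, date(2026, 3, 1)))     # no BAA on file
```

A real control would also need to confirm that the BAA covers the specific product and data types in question, which is a contract review task rather than a date comparison.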

Signal 02. The regulatory gap will be filled by state-level action first

The HHS Office for Civil Rights has authority under HIPAA only over covered entities and business associates. The FTC has authority under Section 5 over unfair or deceptive practices but has limited ability to write broad new privacy regulations without congressional action. State attorneys general and state-level health privacy laws (California's CMIA, Washington's My Health My Data Act, New York's privacy statutes) will fill the gap first. CISOs operating in regulated industries should track state-level enforcement as the leading indicator of where the federal regime will eventually arrive.

Signal 03. The voluntary-compliance pattern will repeat across regulated sectors

The AI healthcare HIPAA gap is the first visible instance of a broader pattern. AI vendors are positioning consumer products with voluntary compliance-support language (HIPAA-ready, FERPA-friendly, GLBA-aligned) rather than seeking formal regulatory coverage. The same pattern will appear in finance (GLBA, BSA), education (FERPA), and government (FedRAMP, FISMA) as AI vendors expand into regulated sectors. CISOs should treat AI vendor compliance claims as marketing statements requiring procurement-level verification, not regulatory status.

What to do this week

- Update your BAA review process to explicitly address generative AI services. Document the organization's position on whether ChatGPT, Claude, or similar tools may process patient data, employee health information, or wellness program data.

- Audit clinician and staff usage of consumer AI tools for patient-care decisions. Many physicians use consumer ChatGPT for differential diagnoses or drug interaction checks; write the governance policy in advance and brief staff on what is and is not permitted.

- Pre-script an incident response playbook for patient data accidentally shared with consumer AI tools. Breach disclosure timelines start at awareness; verify your IR team knows in advance how to characterize and report this category of incident.

- Brief patient communications and patient education teams. Patients consulting AI tools about their health do not have the same legal protections as patients consulting your providers; updated patient education materials are becoming necessary.
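The incident response item above turns on when the clock starts. As a rough illustration, the Python sketch below computes an outer notification date from the day an incident was discovered. It assumes the organization is a HIPAA covered entity and uses the 60-calendar-day outer limit for individual notice in the HIPAA Breach Notification Rule. The class, field, and function names are hypothetical. State laws or contracts may impose shorter windows, and whether a given disclosure is a reportable breach is a legal determination the code does not make.

```python
from dataclasses import dataclass
from datetime import date, timedelta

# Outer limit for individual notice under the HIPAA Breach Notification Rule.
# Notice is also required "without unreasonable delay", so this is a ceiling,
# not a target.
HIPAA_OUTER_LIMIT_DAYS = 60


@dataclass(frozen=True)
class AiDisclosureIncident:
    """Patient data shared with a consumer AI tool (hypothetical record)."""
    tool: str
    discovered_on: date            # the day the organization became aware
    included_identifiers: bool     # whether patient identifiers were present


def outer_notification_deadline(incident: AiDisclosureIncident) -> date:
    """Latest permissible notice date, counted from discovery."""
    return incident.discovered_on + timedelta(days=HIPAA_OUTER_LIMIT_DAYS)


incident = AiDisclosureIncident(
    tool="consumer chatbot",
    discovered_on=date(2026, 1, 12),
    included_identifiers=True,
)
print(outer_notification_deadline(incident))  # 2026-03-13
```

Recording the discovery date at intake, rather than reconstructing it later, is what makes the deadline defensible.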