Google Just Caught the First AI-Generated Zero-Day in the Wild. A Hallucinated CVSS Score Gave It Away.

On May 11, Google Threat Intelligence Group disclosed the first publicly confirmed AI-generated zero-day exploit caught in the wild. The Python script bypassed 2FA on a popular open-source admin tool and was destined for mass exploitation before Google disrupted it.

The RSAC 2026 industry consensus said this was coming. Five weeks later, Google has documented it. A prominent cybercrime group used an AI model to develop a working zero-day exploit, planned to use it for mass exploitation, and was caught before the campaign launched.

MOUNTAIN VIEW, CA — Google Threat Intelligence Group (GTIG) published a landmark report on May 11, 2026 documenting the first publicly confirmed AI-generated zero-day exploit caught in the wild. The exploit, implemented as a Python script, was developed by a prominent cybercrime threat actor planning a mass vulnerability exploitation operation targeting an unnamed open-source, web-based system administration tool. The zero-day enabled two-factor authentication (2FA) bypass once attackers had valid user credentials. GTIG worked with the impacted vendor to responsibly disclose the vulnerability and disrupt the threat activity before the planned mass exploitation campaign could be executed.

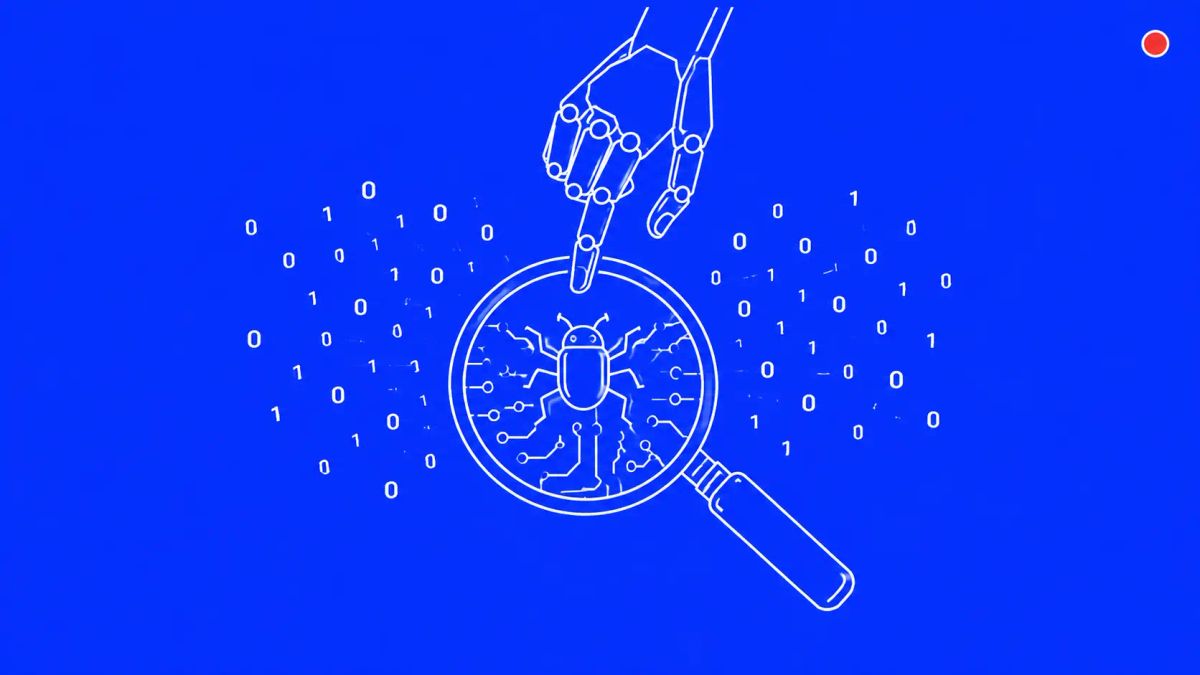

GTIG identified the AI provenance through telltale signatures in the code: a hallucinated CVSS severity score, textbook Python formatting, detailed help menus, and educational docstrings characteristic of large language model output. GTIG explicitly stated that Google's Gemini was not involved. The root vulnerability was a high-level semantic logic flaw in which the developer hardcoded a trust assumption, a class of bug increasingly within reach of LLM reasoning capabilities. The disclosure operationally validates the RSAC 2026 industry consensus on AI-driven cyber acceleration that Mandia, Stamos, and Adamski warned about, and it arrived only five weeks after the conference.

What GTIG found and how it caught the AI provenance

The exploit was a Python script that bypassed 2FA on a popular open-source, web-based system administration tool after attackers obtained valid user credentials. GTIG's analysis surfaced multiple structural signatures that, in combination, gave the team high confidence the script was developed with LLM assistance. The hallucinated CVSS severity score is the most distinctive of these: LLMs frequently fabricate plausible-looking numerical metadata when asked to characterize their output, and a human exploit developer building a zero-day for criminal use has no reason to include a CVSS score at all. Textbook Python formatting, detailed help menus consistent with developer-tool conventions, and educational docstrings characteristic of training data round out the indicators.

John Hultquist, Chief Analyst at GTIG, framed the operational implication in stark terms in his comments to Help Net Security: cybercriminals use zero-days frequently in fast mass exploitation events; because they have to alert their targets in order to extort them, using zero-days for a prolonged period is harder, and rapid deployment is their best option. The exploit Google disrupted was designed for exactly that: speed at scale. Errors in the AI-generated code likely interfered with successful use in this case, but the underlying capability is now documented.

The bug class matters more than the bug

The 2FA bypass exploited a semantic logic flaw — specifically, a developer who hardcoded a trust assumption that contradicted the application's authentication enforcement logic. This is meaningfully different from the bug classes that have dominated mass exploitation in recent years. Memory corruption, improper input sanitization, and SQL injection are pattern-matchable by traditional scanners. Hardcoded trust assumptions are not. They require understanding the developer's intent, identifying the contradiction between what the code does and what the application's security model assumes, and constructing an exploit that targets the semantic gap.
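To make the bug class concrete, here is a minimal, purely illustrative sketch of a hardcoded trust assumption that contradicts 2FA enforcement. It is not the vulnerability GTIG reported; the report names neither the tool nor the flawed code. Every name below (`LoginRequest`, `TRUSTED_SOURCES`, `flawed_login`, `enforced_login`) is hypothetical, and the trusted-address shortcut is just one assumed way such a flaw could look.

```python
from dataclasses import dataclass
from typing import Optional

# Hypothetical: addresses the developer assumed would always be safe.
TRUSTED_SOURCES = {"127.0.0.1"}


@dataclass
class LoginRequest:
    username: str
    password_ok: bool                    # outcome of the password check
    otp_ok: bool                         # outcome of the second-factor check
    source_ip: str                       # address of the connecting socket
    forwarded_for: Optional[str] = None  # client-supplied header value


def flawed_login(req: LoginRequest) -> bool:
    """Login flow with a hardcoded trust assumption (illustrative only)."""
    if not req.password_ok:
        return False
    # Flaw: the code trusts a value the client controls, so the
    # "local requests are safe" assumption silently overrides 2FA.
    claimed = req.forwarded_for or req.source_ip
    if claimed in TRUSTED_SOURCES:
        return True
    return req.otp_ok


def enforced_login(req: LoginRequest) -> bool:
    """Same flow with no exemption: both factors are always required."""
    return req.password_ok and req.otp_ok


# An attacker holding valid credentials but no second factor.
stolen = LoginRequest("admin", True, False, "203.0.113.7", "127.0.0.1")
print(flawed_login(stolen), enforced_login(stolen))
```

Nothing in `flawed_login` matches a conventional injection or memory-safety signature; the problem only appears when the exemption is read against the intent that every login requires a second factor.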

GTIG's framing in the report is that frontier LLMs still struggle to navigate complex enterprise authorization logic but have an increasing ability to perform contextual reasoning, effectively reading the developer's intent to correlate the 2FA enforcement logic with the underlying trust assumption. The implication for defenders is significant: bug classes that traditional static analysis cannot detect are now within reach of AI-assisted vulnerability discovery, and the exploitation timeline for those bugs is collapsing accordingly.

The broader 2026 AI-as-attack-surface report

The 2FA exploit is the headline finding, but GTIG's report documents a maturing transition from nascent AI-enabled operations to the industrial-scale application of generative models in adversarial workflows. Russia-nexus actors deployed two malware families — CANFAIL and LONGSTREAM — with AI-generated decoy code to obscure malicious functionality, with CANFAIL containing LLM-authored comments explicitly describing blocks of code as "unused filler." North Korean APT45 is using AI to churn through thousands of exploit checks and bulk out its toolkit. China-nexus operators are using the Hexstrike and Strix frameworks alongside the Graphiti memory system to autonomously probe a Japanese technology firm and an East Asian cybersecurity platform.

Google also disclosed observing UNC2814, a Chinese cyberespionage group, attempting to bypass Gemini guardrails with prompts directing the model to act as a security expert specialized in embedded devices — persona-driven jailbreak prompting aimed at analyzing TP-Link firmware and Odette File Transfer Protocol implementations for vulnerabilities. Google has been disabling malicious accounts abusing Gemini and is pushing AI defender tools including the Big Sleep vulnerability discovery agent and CodeMender patching tool into wider use.

Why this matters for the velocity gap CISOs were warned about

Five weeks ago at RSAC 2026, Kevin Mandia, Alex Stamos, and Morgan Adamski independently converged on the same operational claim: AI is collapsing the time between vulnerability discovery and weaponization to a tempo defenders cannot match with current patch cycles, procurement timelines, or compliance frameworks. Mandia's specific warning was operationally concrete: organizations would not have time to call Mandiant on a Thursday afternoon, get people in, sign a contract.

The Google disclosure is the first publicly documented instance of that warning being operationally validated. A prominent cybercrime group used an AI model to discover and weaponize a zero-day in a widely deployed open-source tool, with mass exploitation as the operational objective. How short the timeline from AI-assisted discovery to mass exploitation has become is the question that matters for every CISO this week.

The CyberSignal Analysis

Signal 01 — The weeks-to-months exploitation window is collapsing

Traditional patch SLAs calibrated to 30, 60, or 90 days are now structurally misaligned with the threat tempo this disclosure documents. The right response is not to patch faster within the existing SLA. It is to treat AI-assisted discovery and exploitation as a new class of risk that requires pre-authorized emergency patching, automated rollback for critical paths, and pre-built IR retainer relationships that do not depend on procurement cycles. Boards should expect this conversation from their CISOs in the next 30 days; CISOs who have not initiated it should do so this week.

Signal 02 — Semantic logic flaws are now an AI-discoverable bug class

The 2FA bypass GTIG documented exploited a hardcoded trust assumption — a class of bug that traditional static analysis cannot reliably detect. AppSec programs that rely primarily on SAST, DAST, and dependency scanning will miss this class. The defensible response is to augment the existing scanner stack with LLM-assisted security review for authentication and authorization code paths specifically, and to pen-test against semantic logic flaws in the most authentication-sensitive parts of the codebase.

Signal 03 — AI output signatures are a new triage dimension for IR

Hallucinated CVE IDs, fabricated CVSS scores, textbook code formatting that a human exploit developer would rarely bother with, and educational docstrings characteristic of LLM training data are now operationally useful indicators when triaging attack tooling found in an environment. Update YARA rules and detection patterns to flag these characteristics. Add "was this AI-developed?" as a question in your IR playbook for new attack tooling discovered post-incident.
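As a rough illustration of what such triage could look like for a recovered Python script, the sketch below counts three of the indicators named above: CVSS-style score mentions, the share of functions carrying docstrings, and `help=` keyword arguments of the kind `argparse` uses. The function name `llm_style_indicators`, the regular expression, and the sample script are assumptions made for this example; none of them reflects GTIG's tooling, and these signals are weak on their own since plenty of human-written code also has docstrings and help text.

```python
import ast
import re

# Assumed heuristic: "CVSS" followed on the same line by a decimal number.
CVSS_PATTERN = re.compile(r"cvss[^\n]{0,40}?\b\d{1,2}\.\d\b", re.IGNORECASE)


def llm_style_indicators(source: str) -> dict:
    """Return simple counts of LLM-style traits in Python source text."""
    tree = ast.parse(source)
    funcs = [
        node for node in ast.walk(tree)
        if isinstance(node, (ast.FunctionDef, ast.AsyncFunctionDef))
    ]
    documented = [f for f in funcs if ast.get_docstring(f)]
    # Count help="..." keyword arguments on any call (argparse-style menus).
    help_args = sum(
        1
        for node in ast.walk(tree)
        if isinstance(node, ast.Call)
        for kw in node.keywords
        if kw.arg == "help"
    )
    return {
        "cvss_mentions": len(CVSS_PATTERN.findall(source)),
        "docstring_ratio": len(documented) / len(funcs) if funcs else 0.0,
        "help_keyword_args": help_args,
    }


# Harmless stand-in for a recovered script (hypothetical content).
SAMPLE = '''
"""Demo tool. CVSS Score: 9.8 (Critical)"""
import argparse

def build_parser():
    """Create the command-line parser."""
    parser = argparse.ArgumentParser(description="demo")
    parser.add_argument("--target", help="Target URL")
    parser.add_argument("--user", help="Username")
    return parser

def run(target):
    """Run the check against the target."""
    return target
'''

print(llm_style_indicators(SAMPLE))
```

The counts are best treated as one input to an analyst's judgment, recorded alongside other provenance evidence, rather than as a verdict.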

What to do this week

- Audit your patch SLAs against an "hours-to-days" exploitation window assumption for high-severity CVEs in widely deployed open-source tooling. Where the existing SLA cannot meet that bar, document the gap, identify the compensating controls, and brief executive leadership on the residual risk.

- Tabletop the "AI-discovered zero-day in your stack" scenario this month. Use cPanel, Jenkins, Kubernetes, Apache, or any other widely deployed tool relevant to your environment as the hypothetical. Pre-script the workflow now, from initial advisory through emergency change-control, patch validation, and post-incident review.

- For AppSec teams: inventory your authentication and authorization code paths. Identify where trust assumptions get hardcoded and where exception-handling shortcuts have been left in production. These are the patterns AI-assisted vulnerability discovery is now reliably finding.

- Update your threat intelligence cadence. GTIG, CISA KEV, and sector ISAC advisories warrant near-real-time monitoring during high-disclosure events. Weekly digest cadence is no longer adequate for organizations with significant open-source exposure.
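
The SLA audit in the first item above comes down to simple arithmetic, which the sketch below works through. The inventory entries, the `PatchSla` and `sla_gaps` names, and the two-day default window are all assumptions chosen for illustration; "hours-to-days" is not a number taken from the GTIG report, so substitute whatever window your own risk assessment supports.

```python
from dataclasses import dataclass


@dataclass
class PatchSla:
    asset: str
    severity: str    # e.g. "critical", "high", "medium", "low"
    sla_days: float  # contractual time-to-patch


def sla_gaps(slas, window_days=2.0, severities=("critical", "high")):
    """List (asset, gap_in_days) where the SLA exceeds the assumed window.

    window_days is an assumed exploitation window, not a measured value.
    """
    gaps = [
        (s.asset, s.sla_days - window_days)
        for s in slas
        if s.severity in severities and s.sla_days > window_days
    ]
    # Largest gap first, so the briefing leads with the worst exposure.
    return sorted(gaps, key=lambda gap: gap[1], reverse=True)


# Hypothetical inventory for illustration.
INVENTORY = [
    PatchSla("admin-panel", "critical", 30),
    PatchSla("ci-server", "high", 7),
    PatchSla("wiki", "low", 90),
    PatchSla("edge-proxy", "critical", 1),
]

for asset, gap in sla_gaps(INVENTORY):
    print(f"{asset}: SLA exceeds assumed window by {gap:g} days")
```

Each asset that appears in the output is a candidate for a documented gap, a compensating control, and a line in the residual-risk briefing.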