A Fake OpenAI Repository on Hugging Face Hit 244,000 Downloads. It Was Stealing Crypto Wallets and Browser Sessions.

HiddenLayer disclosed on May 7 that a malicious Hugging Face repository, Open-OSS/privacy-filter, typosquatted OpenAI's legitimate Privacy Filter release and shipped a Rust-based infostealer called Boxter. The repo briefly hit #1 on Hugging Face and reached 244,000 downloads before takedown.

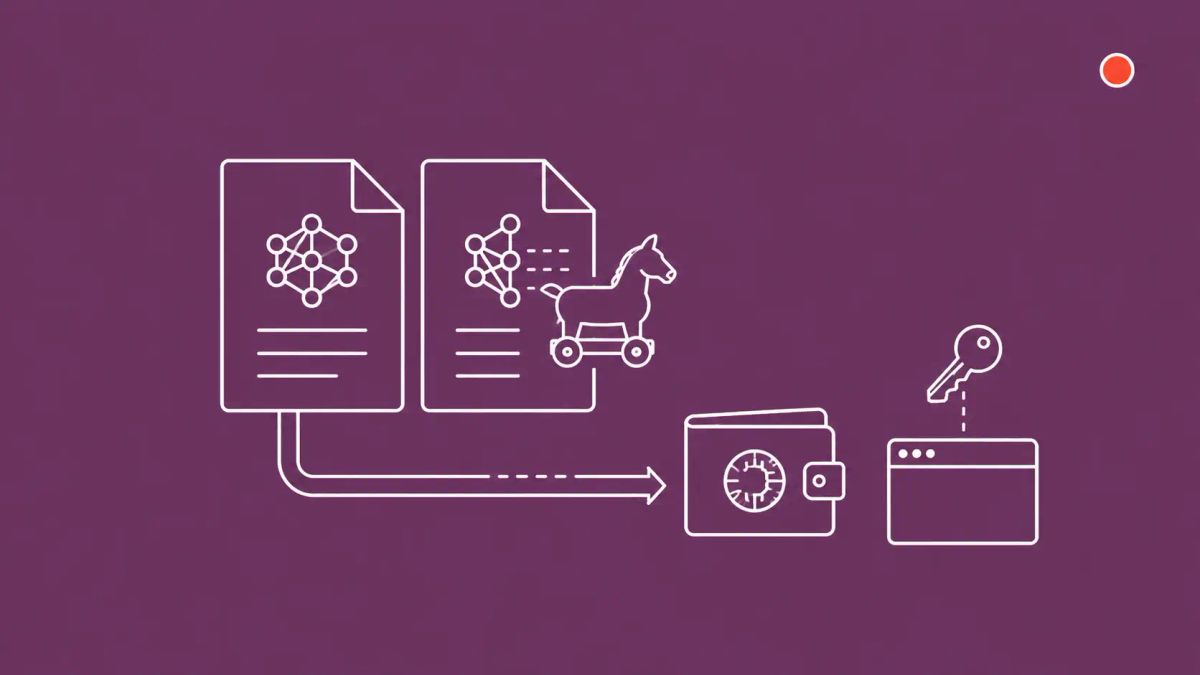

HiddenLayer researchers disclosed on May 7, 2026 that a malicious Hugging Face repository named Open-OSS/privacy-filter typosquatted OpenAI's legitimate Privacy Filter release, copied the model card nearly verbatim, and shipped a loader.py file that fetches and executes a Rust-based infostealer on Windows machines. The repository briefly reached the top of Hugging Face's trending list and accumulated 244,000 downloads before the platform removed it. The malware harvests browser passwords, cookies, session tokens, cryptocurrency wallets, and seed phrases, then exfiltrates the compressed data to a command-and-control server at recargapopular[.]com. OpenAI's Hugging Face account flagged the typosquat as Boxter malware.

On Thursday, May 7, 2026, AI/ML security firm HiddenLayer identified the Open-OSS/privacy-filter repository on Hugging Face as a typosquat of OpenAI's legitimate openai/privacy-filter release. OpenAI had published Privacy Filter in late April under an Apache 2.0 license: a 1.5-billion-parameter (50 million active) bidirectional token-classification model designed for personally identifiable information detection and masking, intended to pre-scrub free-text fields, clinical notes, and support transcripts before they reach a frontier model provider. The legitimate model is hosted by the verified openai account on Hugging Face. The malicious repository, hosted by a newly created Open-OSS account, copied the model card nearly verbatim and reached the top of Hugging Face's trending list before the platform responded to reports and removed it.

The single most operationally consequential element is the lure platform. Typosquats targeting npm and PyPI have been documented for years, and developers have, however imperfectly, internalized that the package they install needs verification beyond a name match. Hugging Face is structurally similar to npm and PyPI for AI/ML developers, but the platform is comparatively young as an attack surface and the developer population using it includes data scientists, ML engineers, researchers, and AI builders who have not yet absorbed equivalent supply-chain hygiene. The 244,000 download count is, per HiddenLayer, likely artificially inflated, and the 667 likes appear to come largely from auto-generated accounts. But the pattern itself (a fully cloned AI model release with model card, dataset references, and a malicious inference loader) is the meaningful development. AI model repositories have now joined the developer-facing supply-chain threat list alongside npm and PyPI.

The infrastructure overlap with a parallel npm typosquatting campaign distributing the WinOS 4.0 implant elevates the assessment from one-off to professional tradecraft. HiddenLayer found additional repositories using the same malicious loader infrastructure and noted overlaps with that npm campaign. The same operator, or a closely connected cluster, is running cross-platform supply-chain operations against developer-adjacent populations.

Hugging Face Boxter Typosquat Profile

| Detail | Information |
|---|---|
| Disclosed | May 7, 2026 by HiddenLayer (AI/ML security firm) |
| Malicious repository | Open-OSS/privacy-filter on Hugging Face (now removed) |
| Legitimate target | openai/privacy-filter (Apache 2.0; PII detection model published late April 2026) |
| Reach | Briefly hit top of Hugging Face trending; 244,000 downloads (likely artificially inflated); 667 likes (largely auto-generated accounts) |
| Malware family | Boxter (per OpenAI / Hugging Face flag); Rust-based infostealer; final payload named sefirah |
| Lure file | loader.py with fake AI-related code; disables SSL verification, decodes a base64 URL, fetches PowerShell payload |
| Stage 2 | Invisible-window PowerShell downloads start.bat; performs privilege escalation; fetches sefirah; adds payload to Microsoft Defender exclusions; executes |
| Targets (browser) | Cookies, saved passwords, encryption keys, browsing data, session tokens from Chromium- and Gecko-based browsers |
| Targets (crypto) | Cryptocurrency wallets and seed phrases |
| Anti-analysis | VM, sandbox, debugger, and analysis-tool detection |
| Command and control | recargapopular[.]com (defanged for publication); receives compressed exfiltrated data |
| Cross-campaign overlap | HiddenLayer identified other repositories using the same loader infrastructure, plus overlaps with an npm typosquatting campaign distributing the WinOS 4.0 implant |

The Attack Chain From Click to Compromise

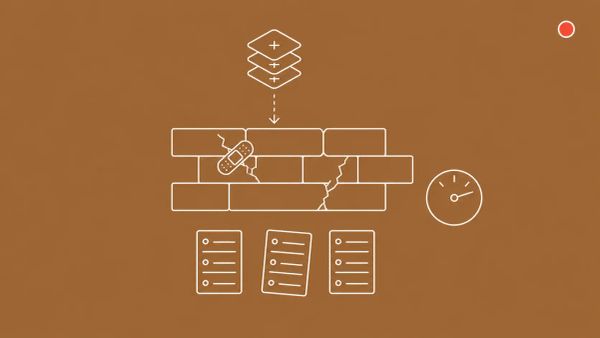

The chain is mechanically straightforward, which is part of why it works. A user looking for OpenAI's Privacy Filter searches Hugging Face, finds the typosquatted Open-OSS/privacy-filter repository on the trending list, downloads what appears to be a legitimate model with a verbatim copy of OpenAI's model card, and runs loader.py expecting it to load weights and instantiate the inference pipeline. The script contains plausible-looking AI code that survives a quick visual inspection. In the background, loader.py disables SSL verification, decodes a base64-encoded URL pointing to an external resource, and fetches a JSON payload that contains a PowerShell command. PowerShell is launched in an invisible window and downloads start.bat from the same attacker infrastructure.

start.bat performs privilege escalation, downloads the final Rust binary HiddenLayer calls sefirah, adds the binary to Microsoft Defender's exclusion list, and executes it. The Rust infostealer then runs anti-analysis checks for virtual machines, sandboxes, debuggers, and known analysis tools before proceeding. If the environment looks real, sefirah harvests cookies, saved passwords, encryption keys, browsing data, and session tokens from Chromium- and Gecko-based browsers, plus cryptocurrency wallets and seed phrases. Stolen data is compressed and exfiltrated to recargapopular[.]com over the network. The compromise window from initial loader.py execution to first exfiltration can be measured in minutes.
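The loader behaviors described above (disabled SSL verification, base64 decoding, a PowerShell launch) lend themselves to a quick static triage pass before anyone runs an unfamiliar loader.py. The following is a minimal sketch, not HiddenLayer's detection logic: the pattern set and the names `SUSPICIOUS_PATTERNS` and `scan_loader_source` are assumptions made for illustration, and the regular expressions are simple heuristics that an obfuscated script could evade.

```python
from __future__ import annotations

import re

# Heuristic patterns loosely based on the loader behaviors reported in the
# article. These are illustrative assumptions, not vendor signatures.
SUSPICIOUS_PATTERNS = {
    "ssl_verification_disabled": re.compile(
        r"verify\s*=\s*False|_create_unverified_context|CERT_NONE"
    ),
    "base64_decoding": re.compile(r"\bb64decode\s*\("),
    "powershell_invocation": re.compile(r"powershell|pwsh", re.IGNORECASE),
    "process_spawn": re.compile(
        r"\bsubprocess\.|\bos\.system\s*\(|\bos\.popen\s*\("
    ),
}


def scan_loader_source(source: str) -> dict[str, list[int]]:
    """Return {finding_name: [1-based line numbers]} for every pattern hit."""
    findings: dict[str, list[int]] = {}
    for lineno, line in enumerate(source.splitlines(), start=1):
        for name, pattern in SUSPICIOUS_PATTERNS.items():
            if pattern.search(line):
                findings.setdefault(name, []).append(lineno)
    return findings
```

A hit is a prompt for manual review rather than a verdict, since legitimate scripts also decode base64 or spawn processes. An empty result does not clear a script either, because the check only reads the text and never executes it.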

Why the Hugging Face Lure Platform Matters

Hugging Face is the dominant distribution platform for open-source AI models and datasets. Researchers, ML engineers, and AI builders pull models from it the way Python developers pull packages from PyPI or JavaScript developers pull packages from npm. The economics that make those ecosystems attractive to attackers (low cost to publish, high implicit trust from a name match, and easy reach to a developer population whose installed code runs with their machine's privileges) apply equally to Hugging Face. What has been missing until now is publicly documented attacker tradecraft at scale. Boxter changes that. The campaign demonstrates that the typosquat-and-trojanize pattern works on Hugging Face, that ranking and download metrics can be gamed to amplify reach, and that an AI-platform-specific lure (a verbatim model card, model name confusion, a plausible loader script) lands with the target audience.

This sits inside a broader pattern of AI-platform abuse The CyberSignal has been tracking. The WIRED RedAccess vibe-coded-apps investigation documented hundreds of thousands of AI-built apps with insecure-by-default access controls; The CyberSignal's broader application-security coverage tracks how each AI-adjacent platform, from code generators to model repositories, is becoming an attack surface in its own right. Boxter is the latest, sharpest example: a fully cloned AI model release used as a delivery vehicle for a credential-and-crypto-stealing infostealer.

What Boxter Steals and Why Recovery Is Hard

The infostealer's target list is broad and operationally significant. From Chromium- and Gecko-based browsers, sefirah harvests cookies, saved passwords, encryption keys, browsing data, and session tokens. Session tokens are particularly damaging: they let an attacker resume an authenticated session without needing the password, bypassing password rotation as a remediation step. From the local filesystem, the malware harvests cryptocurrency wallets and seed phrases, including from desktop wallet applications and browser extensions for major chains. A successful Boxter infection on a developer or researcher workstation can yield credentials for the developer's GitHub, Hugging Face, cloud provider, password manager, email, and any crypto holdings the user has on the machine.

That target profile is why HiddenLayer's recommended remediation is unusually demanding. Users who downloaded files from the malicious repository are advised to reimage the affected machine, rotate all stored credentials, replace cryptocurrency wallets and seed phrases, and invalidate browser sessions and tokens. Each step is non-trivial. Reimaging requires backup-recovery rehearsal that many individual researchers and small teams have not done. Credential rotation has to span every service touched by the affected machine, which is typically more services than a user remembers. Cryptocurrency wallet replacement means generating new keypairs and moving funds, with the irreversibility risks that implies. Session invalidation has to cover the long tail of services that may not expose a session-revocation control.

Defender Actions for the Next 30 Days

- Audit and inventory Hugging Face usage in your organization. Identify which staff, business units, or product teams pull models or datasets from Hugging Face. Many enterprises do not centrally track this. The audit feeds the next four actions; without it, you cannot scope the exposure or define a sensible policy.

- Establish a verified-publisher allow-list for production-critical AI workloads. Pin specific verified Hugging Face accounts (openai/, meta-llama/, microsoft/, google/, anthropic/, and so on) for production models. Anything outside the allow-list requires security review before download. Cross-reference any model download against the publisher's official announcement on their company blog or GitHub release, and treat a mismatch as a red flag.

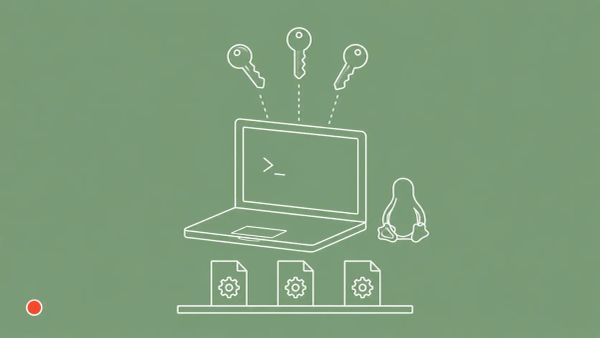

- Run loader scripts in sandboxed environments on first inspection. AI/ML code distributed alongside model weights is the attack surface. Use containers, isolated VMs, or air-gapped research workstations for first-run inspection of any loader.py, install script, or setup utility. Only execute in production environments after security review confirms the script does not disable SSL verification, decode base64 URLs to external resources, or invoke PowerShell.

- Hunt for Boxter-specific indicators on developer and research endpoints. Outbound connections to recargapopular[.]com from any internal asset are high-fidelity. PowerShell processes spawned in invisible windows from a Python interpreter parent process are anomalous and worth alerting. Rust-binary processes resembling sefirah added to Microsoft Defender exclusion lists outside change-control are a red flag. Concurrent reads of Chrome, Edge, Firefox, and crypto-wallet directory paths from a single process indicate active credential harvesting.

- Brief AI/ML and data science teams on the typosquat pattern. Verify the publisher account before downloading any model. Check the model card against the publisher's official announcement. Inspect any loader.py or setup script for SSL bypass, base64-encoded URL fetches, and PowerShell or shell-out calls. Treat repository download counts and likes as marketing signal, not security signal, because platform metrics can be gamed.
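The publisher allow-list and the verify-the-publisher steps in the list above can be approximated with a small pre-download gate. This is a sketch under stated assumptions: `ALLOWED_PUBLISHERS`, `KNOWN_GOOD_REPOS`, and `check_repo_id` are hypothetical names, the allow-list contents are examples taken from the list above, and lowercasing both sides of the comparison is a choice made for this sketch rather than a statement about how Hugging Face resolves names.

```python
# Hypothetical allow-list of publisher namespaces a team has approved.
ALLOWED_PUBLISHERS = {"openai", "meta-llama", "microsoft", "google"}

# Hypothetical list of repositories the team actually intends to use.
KNOWN_GOOD_REPOS = {"openai/privacy-filter"}


def check_repo_id(repo_id: str) -> str:
    """Classify a repository ID of the form 'publisher/name'.

    Returns 'allowed', 'lookalike' (same model name as a known-good
    repository but under a non-approved publisher), or 'needs_review'.
    """
    publisher, sep, name = repo_id.strip().partition("/")
    if not sep or not publisher or not name:
        raise ValueError(f"expected 'publisher/name', got {repo_id!r}")
    # Lowercase both parts so 'Open-OSS' and 'open-oss' compare equal.
    publisher, name = publisher.lower(), name.lower()
    if publisher in ALLOWED_PUBLISHERS:
        return "allowed"
    known_names = {repo.split("/", 1)[1].lower() for repo in KNOWN_GOOD_REPOS}
    if name in known_names:
        return "lookalike"
    return "needs_review"
```

With these example lists, `check_repo_id("Open-OSS/privacy-filter")` returns `'lookalike'`, which is the case this campaign exploited: an identical model name under an unapproved publisher. Such a gate only checks names, so it complements rather than replaces confirming the publisher against an official announcement.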

The CyberSignal Analysis

Signal 01 — AI model repositories are now part of the developer supply-chain threat surface

For roughly five years, application-security teams have absorbed the lesson that npm, PyPI, RubyGems, and Maven Central are contested terrain where typosquats and malicious packages are an ongoing operational concern. Hugging Face has been treated, by most enterprise security programs, as a research artifact distribution platform rather than as a software-supply-chain risk equivalent to npm. Boxter ends that exception. The same offensive economics that drive typosquatting on traditional package registries apply to AI model repositories. The implications for AppSec teams are concrete: extend your supply-chain controls (allow-listing, provenance verification, sandboxed first-run, post-deployment monitoring) to AI/ML platforms, not as an experimental project but as a baseline 2026 program. Organizations that wait for an AI-platform-specific incident to land internally before doing this will be doing the work under incident-response pressure rather than during planned adoption.
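One way to make the provenance-verification control concrete is to compare a downloaded model directory against a pinned manifest of SHA-256 digests recorded at review time. The sketch below assumes such a manifest exists and is maintained by the security team; the manifest format and the names `sha256_of_file` and `verify_against_manifest` are hypothetical, and nothing here reflects a Hugging Face feature.

```python
from __future__ import annotations

import hashlib
from pathlib import Path


def sha256_of_file(path: Path, chunk_size: int = 1 << 20) -> str:
    """Hash a file in chunks so large weight files do not fill memory."""
    digest = hashlib.sha256()
    with path.open("rb") as handle:
        for chunk in iter(lambda: handle.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def verify_against_manifest(model_dir: Path, manifest: dict[str, str]) -> list[str]:
    """Compare files under model_dir with a {relative_path: sha256} manifest.

    Returns a list of problems; an empty list means every pinned file
    matched and no unpinned file was present.
    """
    problems: list[str] = []
    for rel_path, expected in manifest.items():
        target = model_dir / rel_path
        if not target.is_file():
            problems.append(f"missing: {rel_path}")
        elif sha256_of_file(target) != expected.lower():
            problems.append(f"hash mismatch: {rel_path}")
    present = {
        p.relative_to(model_dir).as_posix()
        for p in model_dir.rglob("*")
        if p.is_file()
    }
    # Files that were never reviewed (for example an extra loader script)
    # are reported rather than silently accepted.
    for rel_path in sorted(present - set(manifest)):
        problems.append(f"unexpected file: {rel_path}")
    return problems
```

Flagging unexpected files matters as much as checking hashes in this scenario, because the malicious component in the Boxter case was a script shipped alongside the model rather than a tampered weight file.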

Signal 02 — Cross-platform infrastructure overlap signals professional, sustained tradecraft

HiddenLayer's discovery that the Boxter loader infrastructure overlaps with an npm typosquatting campaign distributing the WinOS 4.0 implant is a more strategically significant finding than the headline reach. A one-off opportunistic typosquat is a noisy threat with limited adversary cost. A cross-platform operation with shared infrastructure across Hugging Face and npm, multiple repositories on each, and a coherent payload-delivery architecture is professional tradecraft. That kind of operator iterates: the repositories that get taken down get replaced; the lures that work get refined; the platforms that improve detection get traded for platforms that have not. Defender posture should reflect that. Treat Boxter as the first publicly visible symptom of an ongoing campaign, not a single closed incident, and instrument accordingly: monitor outbound connections to known C2 infrastructure across both AI and developer platforms, share IOCs with AI-platform vendors, and brief AI-builder communities on the typosquat pattern.
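Monitoring for outbound connections to the known C2 domain can start with a simple pass over proxy or DNS logs. The sketch below is illustrative only: the function names and the idea of scanning free-text log lines are assumptions, real log schemas vary, and the only indicator used is the defanged domain published in this article.

```python
from __future__ import annotations

import re

# C2 domain from the article, kept defanged at rest and refanged in memory.
DEFANGED_IOCS = ["recargapopular[.]com"]


def refang(indicator: str) -> str:
    """Turn a defanged indicator such as 'example[.]com' into 'example.com'."""
    return indicator.replace("[.]", ".")


def build_ioc_matcher(defanged_iocs: list[str]) -> re.Pattern[str]:
    """Build a regex matching each domain or any of its subdomains."""
    domains = "|".join(re.escape(refang(d).lower()) for d in defanged_iocs)
    # The lookbehind rejects matches that start inside a longer hostname
    # label; the lookahead rejects hostnames that merely begin with the
    # indicator (for example indicator.com.example.org).
    return re.compile(
        r"(?<![\w.-])(?:[\w-]+\.)*(?:" + domains + r")(?![\w-]|\.[\w-])"
    )


def find_ioc_hits(
    log_lines: list[str], defanged_iocs: list[str] = DEFANGED_IOCS
) -> list[tuple[int, str]]:
    """Return (1-based line number, matched hostname) for each hit."""
    matcher = build_ioc_matcher(defanged_iocs)
    hits = []
    for lineno, line in enumerate(log_lines, start=1):
        match = matcher.search(line.lower())
        if match:
            hits.append((lineno, match.group(0)))
    return hits
```

Because an operator of this kind is expected to rotate infrastructure, a domain list like this goes stale quickly. The matcher is best treated as one input alongside behavioral detections rather than a standalone control.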

Signal 03 — Recovery from infostealer-class incidents requires identity reconstruction, not patch-and-rescan

HiddenLayer's recommended remediation (full reimage, full credential rotation, crypto wallet replacement, and session invalidation) is the appropriate response to a successful infostealer infection but exceeds what most organizations' incident-response runbooks actually prescribe for a developer-endpoint compromise. Many IR playbooks still treat infostealer hits as a malware-removal exercise plus password reset, on the implicit assumption that the affected user's credentials can be rotated cleanly and the rest of the environment is unaffected. Boxter's target list (browser session tokens, encryption keys, password manager artifacts, crypto wallets) makes that assumption untenable. CISOs should update IR plans to reflect that infostealer-class incidents now require complete identity reconstruction for the affected user, including session-token invalidation across every authenticated service, replacement of any cryptocurrency wallets held on the device, and full machine reimage rather than cleanup. The cost of doing this is real but bounded; the cost of not doing it is that the attacker retains access via stolen session tokens long after the malware has been removed.