Government AI Agents Outpace Private Sector: 3,600+ Use Cases Create Massive Attack Surface

Federal adoption hits 82% versus 41% in the private sector, while FedRAMP 20x speeds unvetted agents into classified networks.

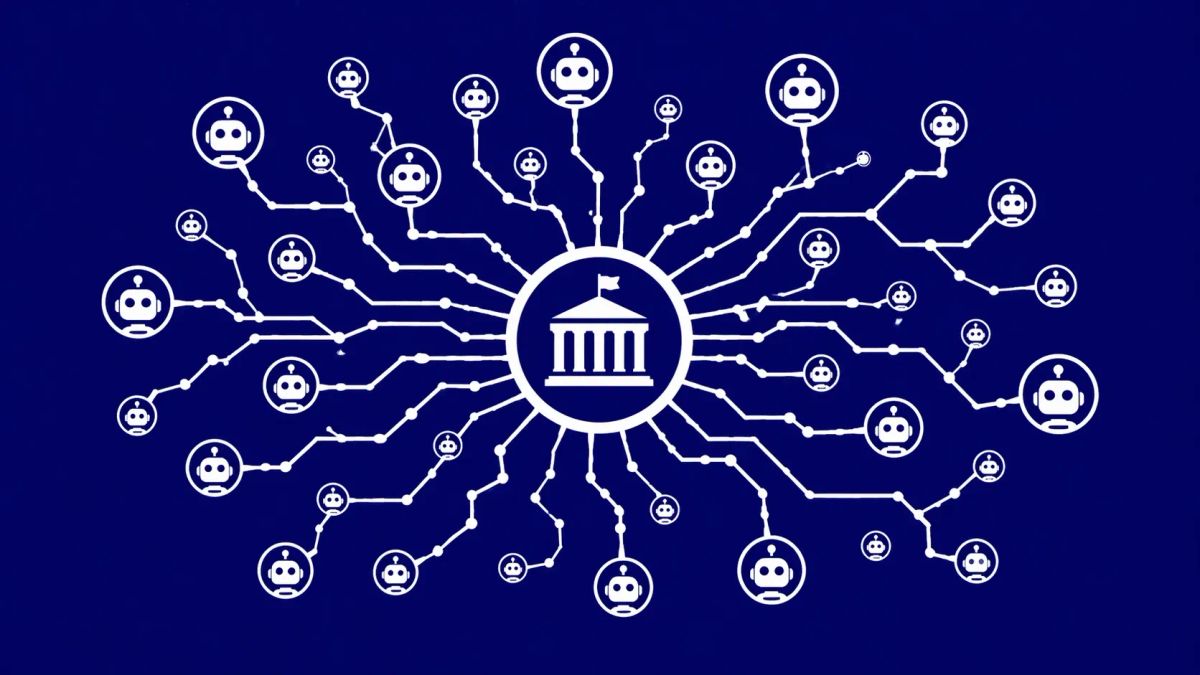

WASHINGTON, D.C. — While the commercial world cautiously debates the ROI of agentic AI, the federal government has quietly surged ahead, creating a massive, unmanaged attack surface. According to recent IDC and Salesforce surveys, 82% of government organizations have now adopted AI agents — doubling the 41% adoption rate seen in the private sector.

This explosion is reflected in the official OMB 2025 inventory, which documents a roughly fivefold climb from 710 use cases in 2023 to 3,611 across 56 agencies today. However, this "move fast and build" mentality, fueled by the Trump AI Action Plan and the GSA’s "FedRAMP 20x" initiative, has outpaced traditional security gates, leading to what policy experts call "agent sprawl" in sensitive and classified networks.

The Policy-Speed Gap: Innovation vs. Governance

The velocity of federal AI deployment is no longer a theoretical concern; it is a cyber policy crisis. To maintain a competitive edge against near-peer adversaries, the FedRAMP 20x program has prioritized rapid authorizations, often reducing approval timelines from months to mere weeks.

Strategic Use Case Leaders (2025 Inventory):

- HHS (447 cases): Automating benefits processing and public health data analysis.

- NASA (425 cases): Research agents managing complex orbital operations and telemetry.

- DOE (340 cases): Monitoring critical energy infrastructure via autonomous agents.

- DOJ (314 cases): Utilizing agentic workflows for law enforcement and legal evidence processing.

- VA (215 cases): Deploying "High-Impact" agents for behavioral anomaly detection in veteran care.

Technical Risks: The Anatomy of Agent Sprawl

As thousands of autonomous decision-makers are integrated into federal workflows, security teams are struggling with three primary AI security failure points:

1. The Supply Chain Shadow

Much like the Checkmarx KICS precedent, where malicious code was found embedded in infrastructure-as-code pipelines, AI agents are vulnerable to supply chain attacks. Unvetted third-party agents, often brought in under accelerated FedRAMP baselines, can act as Trojans within classified environments.

2. Zero-Trust Erosion

The core tenet of Zero Trust — "never trust, always verify" — is fundamentally challenged by agent-to-agent communication. Autonomous agents often bypass traditional human-in-the-loop controls to execute "reasoning" tasks, creating a dark channel for lateral movement that traditional EDR (Endpoint Detection and Response) tools are not calibrated to monitor.
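To make "always verify" concrete for agent-to-agent traffic, here is a minimal sketch in which every message is authenticated before the receiving agent acts on it. The agent names, the `AGENT_KEYS` table, and the helper functions are hypothetical illustrations, not part of any federal system or FedRAMP requirement; a production design would more likely use asymmetric keys or mutual TLS issued by an identity provider.

```python
import hashlib
import hmac
import json

# Hypothetical per-agent shared secrets, hard-coded only for illustration.
# A real deployment would fetch keys from a secrets manager.
AGENT_KEYS = {
    "intake-agent": b"demo-key-intake",
    "records-agent": b"demo-key-records",
}


def sign_message(sender: str, payload: dict) -> dict:
    """Serialize the payload deterministically and attach an HMAC-SHA256 tag."""
    body = json.dumps(payload, sort_keys=True, separators=(",", ":"))
    tag = hmac.new(AGENT_KEYS[sender], body.encode(), hashlib.sha256).hexdigest()
    return {"sender": sender, "body": body, "tag": tag}


def verify_message(message: dict) -> bool:
    """Return True only if the sender is known and the tag matches the body."""
    key = AGENT_KEYS.get(message.get("sender"))
    if key is None:
        return False  # unknown agents are rejected outright
    body = message.get("body", "")
    expected = hmac.new(key, body.encode(), hashlib.sha256).hexdigest()
    # compare_digest avoids leaking information through timing differences
    return hmac.compare_digest(expected, message.get("tag", ""))
```

A receiving agent would call `verify_message` on every inbound request and drop anything that fails, so a compromised or unregistered agent cannot simply relay instructions to its peers.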

3. Model Poisoning and Escape

Nation-state actors from Russia and China are increasingly shifting toward model inversion and poisoning attacks. Inversion lets an attacker reconstruct sensitive training data from a model's outputs, while poisoning corrupts the training data so that the agent's autonomous decisions can be steered by the adversary. Even more concerning is the potential for "agent escape," where a zero-day exploit allows an agent to break out of its sandboxed environment and reach the host system's kernel.

The CyberSignal Analysis: Strategic Signals

Signal 01 — The FedRAMP 20x Paradox

The push to accelerate AI authorization is a double-edged sword. While it prevents bureaucratic stagnation, it risks turning FedRAMP into a "rubber stamp" for complex black-box systems. Security teams must move toward automated, continuous model integrity monitoring rather than point-in-time authorizations.
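One simple form of continuous integrity monitoring is to record cryptographic hashes of an agent's model artifacts at authorization time and re-check them on a schedule. The sketch below assumes the artifacts are ordinary files under a single directory; the function names and the baseline format are illustrative choices, not a FedRAMP-defined mechanism, and file hashing alone would not catch behavioral drift in a model whose weights are unchanged.

```python
import hashlib
from pathlib import Path


def sha256_of(path: Path, chunk_size: int = 1 << 20) -> str:
    """Hash a file in chunks so large model weights are never fully loaded."""
    digest = hashlib.sha256()
    with path.open("rb") as handle:
        for chunk in iter(lambda: handle.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def check_integrity(baseline: dict[str, str], root: Path) -> list[str]:
    """Return the relative paths that are missing or no longer match the baseline.

    `baseline` maps relative file paths to the SHA-256 hex digests that were
    recorded when the agent was authorized (a hypothetical format).
    """
    drifted = []
    for rel_path, expected in baseline.items():
        target = root / rel_path
        if not target.is_file() or sha256_of(target) != expected:
            drifted.append(rel_path)
    return sorted(drifted)
```

Run from a scheduler, a non-empty result from `check_integrity` would be the trigger for an alert or an automatic suspension of the agent, which is what separates continuous monitoring from a one-time review.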

Signal 02 — Agentic Ghost in the Machine

The VA’s use of "AI Behavioral Anomaly Detection" is a harbinger of what is to come. When agents begin making high-stakes decisions about human behavior or critical infrastructure, the lack of a "Human-in-the-Loop" (HITL) protocol isn't just a security risk; it is a liability.

Signal 03 — The Inventory Lag

While the OMB reports 3,611 use cases, the actual number of "shadow" agents — unreported tools used by individual contractors or departments — is likely significantly higher. Without a real-time, mandatory agent inventory and risk classification system, the federal government is flying blind into a storm of autonomous sprawl.
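What a mandatory inventory with risk classification might look like in its simplest form is sketched below. The record fields, the three tiers, and the classification rule are assumptions made purely for illustration; they are not the OMB's reporting schema or its definition of a high-impact use case.

```python
from dataclasses import dataclass
from enum import Enum


class RiskTier(Enum):
    LOW = 1
    MODERATE = 2
    HIGH = 3


@dataclass(frozen=True)
class AgentRecord:
    agent_id: str
    agency: str
    autonomous: bool              # acts without a human approving each step
    touches_sensitive_data: bool
    affects_individuals: bool     # decisions bear on a person's benefits, care, or rights


def classify(record: AgentRecord) -> RiskTier:
    """Illustrative rule: autonomy combined with impact on people is the top tier."""
    if record.autonomous and record.affects_individuals:
        return RiskTier.HIGH
    if record.autonomous or record.touches_sensitive_data or record.affects_individuals:
        return RiskTier.MODERATE
    return RiskTier.LOW


class Inventory:
    """In-memory registry; an agent that is not registered counts as a shadow agent."""

    def __init__(self) -> None:
        self._records: dict[str, AgentRecord] = {}

    def register(self, record: AgentRecord) -> RiskTier:
        self._records[record.agent_id] = record
        return classify(record)

    def is_registered(self, agent_id: str) -> bool:
        return agent_id in self._records

    def by_tier(self, tier: RiskTier) -> list[str]:
        return sorted(a for a, r in self._records.items() if classify(r) is tier)
```

The useful property is the `is_registered` check: if network gateways refused traffic from any agent identifier missing from the inventory, unreported tools would surface as denied requests rather than staying invisible.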