Shadow Execution: Google Patches Critical RCE Flaw in ‘Antigravity’ AI Developer Tool

Researchers at Pillar Security successfully bypassed Antigravity’s most restrictive "Secure Mode" using a prompt injection that transformed a simple file-search tool into an arbitrary command execution engine.

MOUNTAIN VIEW, CA — Google has issued a critical patch for Antigravity, its flagship agentic Integrated Development Environment (IDE), after security researchers demonstrated how the tool’s autonomous capabilities could be hijacked to escape its sandbox. The vulnerability allowed an attacker to achieve Remote Code Execution (RCE) on a developer's machine by simply tricking the AI agent into looking at a malicious file.

The discovery highlights a burgeoning "Trust Gap" in agentic AI: tools designed to help developers move faster are often granted system-level permissions that can be weaponized through non-technical, natural language instructions.

Exploit Summary: The BRIDGE:BREAK of AI IDEs

The Attack Chain: From Search to Shell

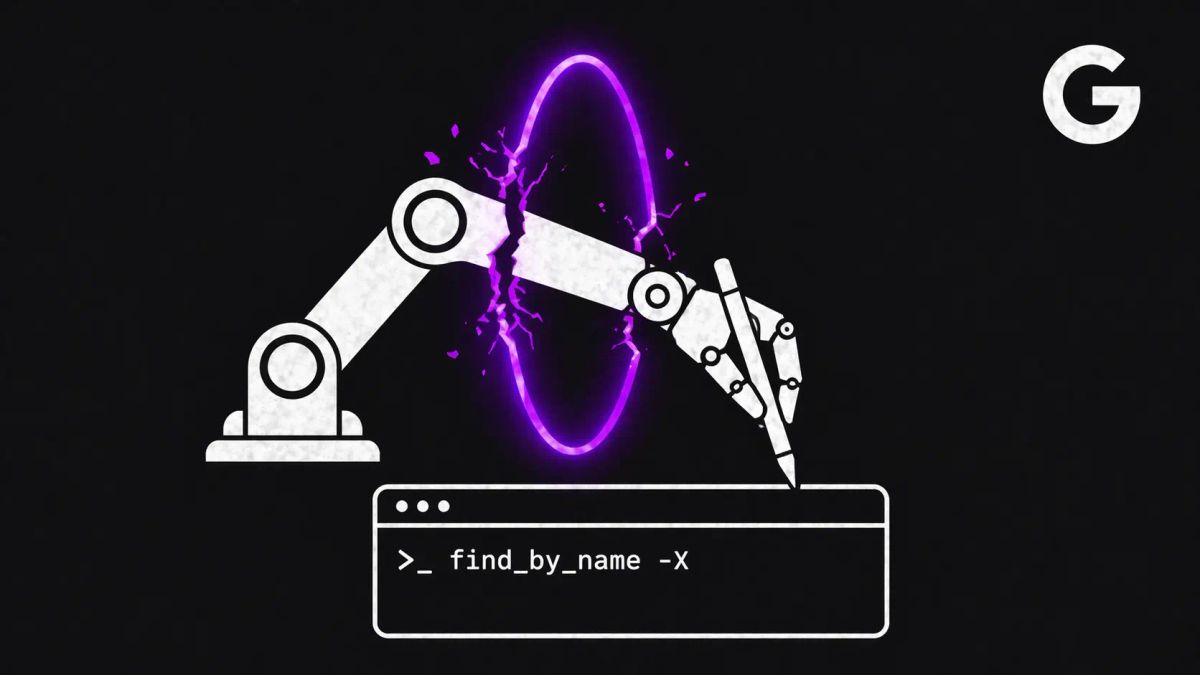

The flaw, uncovered by Pillar Security and later analyzed by Vedere Labs, centers on a native tool called find_by_name. This tool uses the fd utility (a faster alternative to the standard find command) to locate files within a workspace.

The researchers found that the tool's Pattern parameter lacked input sanitization. By using Indirect Prompt Injection, an attacker could plant a comment in a public code repository that instructed the Antigravity agent to perform a search with a malicious flag.

- The Injection: The attacker hides an instruction in a README or source file.

- The Execution: When the agent processes the file, it executes find_by_name with the -X (exec-batch) flag.

- The Result: The IDE executes a staged malicious script, bypassing the "Secure Mode" that is supposed to prevent out-of-workspace writes and network access.
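The underlying bug class is argument injection: a value meant to be a search pattern is read by the command-line tool as an option. The Python sketch below is illustrative only and is not Antigravity's code. The function names are hypothetical, and the only fd behavior it assumes is that a leading "-" marks an option and that "--" ends option parsing. It builds argument lists without running anything.

```python
from typing import List


def build_fd_argv_unsafe(pattern: str, root: str) -> List[str]:
    # Hypothetical vulnerable builder: the pattern sits where fd still
    # parses options, so a value starting with "-" is read as a flag.
    return ["fd", pattern, root]


def build_fd_argv_safe(pattern: str, root: str) -> List[str]:
    # Reject option-like input outright.
    if pattern.startswith("-"):
        raise ValueError("search pattern must not start with '-'")
    # Add "--" so everything after it is treated as a positional argument.
    return ["fd", "--", pattern, root]
```

With the unsafe builder, a pattern that begins with -X reaches fd as an option rather than as text to search for. The safe builder refuses that input and, as a second layer, places the pattern after the end-of-options marker.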

Bypassing "Secure Mode"

What makes this disclosure particularly concerning is that the exploit functioned even when Antigravity was configured in its most restrictive security posture. "Secure Mode" is designed to act as a digital cage, but because the find_by_name command was interpreted as a "native tool invocation," it was executed before the security constraints were applied.
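The ordering problem described here can be illustrated with a small dispatcher in which the policy check runs before every tool call, native or not. This is a sketch under stated assumptions, not a description of how Antigravity is built: ToolCall, PolicyViolation, check_policy, and dispatch are hypothetical names, and the rule that rejects option-like arguments is a stand-in for whatever a real secure mode enforces.

```python
from dataclasses import dataclass
from typing import Callable, Dict


@dataclass
class ToolCall:
    name: str
    args: Dict[str, str]


class PolicyViolation(Exception):
    pass


def check_policy(call: ToolCall, secure_mode: bool) -> None:
    # Hypothetical rule: in secure mode, no argument may look like a CLI option.
    if not secure_mode:
        return
    for key, value in call.args.items():
        if value.startswith("-"):
            raise PolicyViolation(f"{call.name}.{key} looks like a command-line option")


def dispatch(call: ToolCall, tools: Dict[str, Callable[..., object]], secure_mode: bool) -> object:
    # The check runs first for every call; there is no fast path that
    # lets a "native" tool execute before constraints are applied.
    check_policy(call, secure_mode)
    return tools[call.name](**call.args)
```

The design point is that the security check sits in the single path every invocation must take, so classifying a call as native cannot move it ahead of the constraints.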

"Traditional security models assume a human will catch something suspicious," stated researchers at Mindgard AI, who independently identified persistent code execution risks in the tool. "But when an autonomous agent follows instructions from external, untrusted content, that trust model breaks down."

The CyberSignal Analysis

Signal 01 — The "Agentic" Supply Chain Risk

This incident is a definitive "Signal" for third-party risk. In 2026, your "third party" isn't just a vendor; it's the AI agent living in your IDE. This case proves that supply chain security now extends to the comments and documentation inside the code you pull from GitHub. If your agent blindly follows "Shadow Prompts" hidden in a repository, the agent becomes the ultimate insider threat.

Signal 02 — The Death of Sanitization-Only Defense

This is a high-fidelity "Signal" for vulnerabilities. The fact that a critical RCE was achieved through a simple "Pattern" parameter shows that we are repeating the injection mistakes of the 1990s (SQLi) in the AI era. For B2B leaders, the "Signal" is clear: vulnerability management for AI tools must move beyond simple input filtering toward execution isolation. If the agent doesn't need root access to search for a file, it shouldn't have it — period.