A 404 Media Reporter Watched Someone Else Become Him, Live, on Microsoft Teams

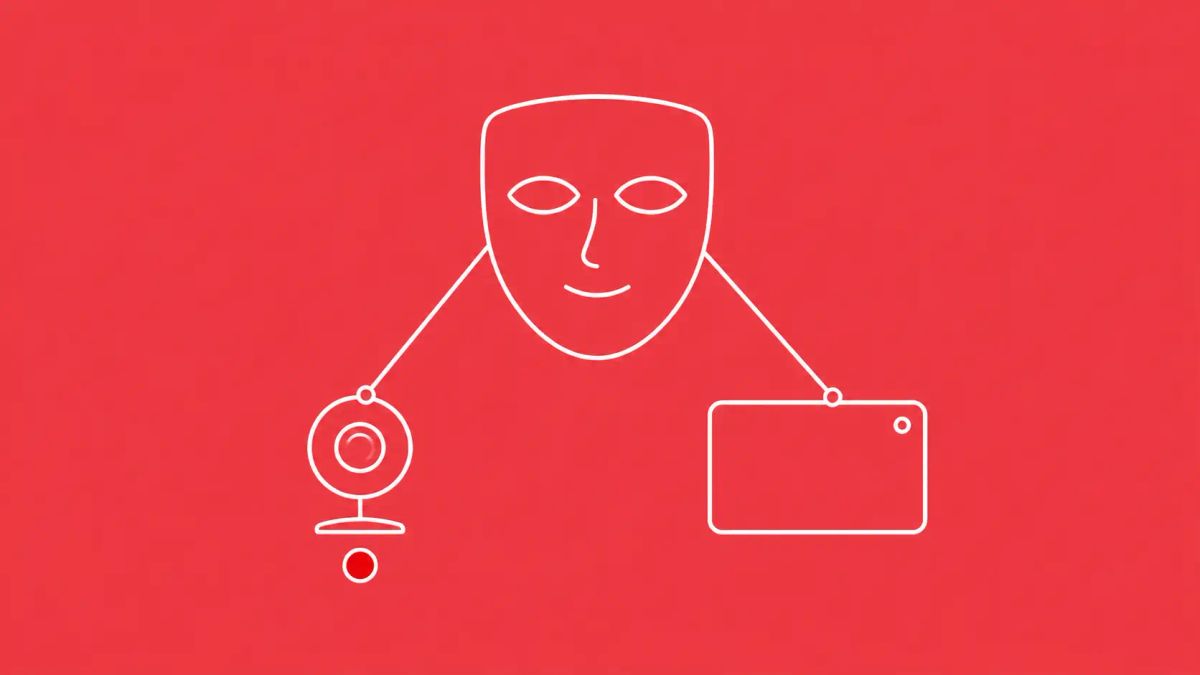

404 Media's Joseph Cox published an investigation on May 7, 2026 in which he obtained, installed, and personally tested Haotian AI, a Chinese realtime deepfake tool marketed to scammers, and watched a Cambodia-based operator's face shapeshift into his own during a live Microsoft Teams call. Haotian AI works on WhatsApp, Zoom, Microsoft Teams, TikTok, Instagram, and YouTube; runs on a moderately high-end gaming PC; has earned its creators more than $4 million; and defeats a leading academic deepfake detector, which misclassified nearly 100 percent of Haotian's outputs as authentic. Romance scams, virtual kidnappings, tax fraud, and business email compromise can all be amplified by this category of tool. Video calls are no longer reliable identity verification.

On May 7, 2026, 404 Media published "HELLO BOSS: Inside the Chinese Realtime Deepfake Software Powering Scams Around the World" by investigative reporter Joseph Cox. After weeks of negotiations with Chinese-language scammers selling the software, Cox obtained a copy of Haotian AI and tested it himself in a live Microsoft Teams call. He watched the operator's face become his own face in real time, with subtle gestures — cheek-pinching, nose-covering, chin-stroking — preserved without breaking the illusion. According to 404 Media's reporting, this is the first journalistic test of a software class that has previously been visible only through its outputs. Haotian AI works specifically with WhatsApp, Microsoft Teams, Zoom, TikTok, Instagram, and YouTube — covering essentially the full surface area of consumer and business video communication.

The single most consequential element is the shift in the deepfake threat model that the reporting documents. Earlier deepfakes were post-production artifacts: someone's face superimposed on existing video, useful for misinformation, harassment, and asynchronous fraud but limited by the absence of liveness. Haotian AI is interactive. It shapeshifts the operator's face during a live call, in response to whatever the conversation calls for, and the technology is mature enough that subtle gestures track correctly. Cox's reporting also surfaces operational context that defenders should internalize: Haotian AI is based out of Cambodia per the cybercrime-fighting NGO Chong Lua Dao, with physical install service offered in Phnom Penh; it has earned its creators more than $4 million; it is likely built on open-source face-swap tools (the value being technical support); and the academic deepfake detector Xception — which hits 89.1 percent accuracy on control samples — misclassified nearly 100 percent of Haotian-generated samples as authentic. The defender takeaway is unambiguous and broadly applicable: any control framework that treats "we hopped on a video call to verify" as a trust anchor needs to be redesigned.

Haotian AI Profile

| Detail | Information |
|---|---|
| Software | Haotian AI: realtime deepfake face-swap tool marketed to scammers |
| Investigation | 404 Media (Joseph Cox), May 7, 2026; first journalistic operational test of this software class |
| Supported platforms | WhatsApp, Microsoft Teams, Zoom, TikTok, Instagram, YouTube |
| Hardware requirement | Moderately high-end gaming PC: i7 processor, 16GB DDR5 RAM, Nvidia 4080 SUPER GPU, per the seller's specification table |
| Operational base | Cambodia per cybercrime-fighting NGO Chong Lua Dao; physical installation service offered in Phnom Penh; linked to Chinese money-laundering networks and Southeast Asia scam compounds |
| Revenue | More than $4 million earned by Haotian AI's creators per 404 Media's reporting |
| Technical foundation | Likely built on open-source face-swap tools per 404 Media; the commercial value is sophisticated technical support, not novel ML |
| User interface controls | Adjustable nose size; "acne removal" feature; eye-shape modification; some configuration may be required for an effective deepfake |
| Detection failure | Per a June 2025 paper from Charles Fross and colleagues: Xception deepfake detector hit 89.1% accuracy on control samples but misclassified nearly 100% of Haotian samples as "authentic" |
| Documented impersonation | Demonstrated impersonating at least one U.S. police department per 404 Media's investigation |
| Threat applications | Romance scams, virtual kidnappings, tax fraud, business email compromise (BEC), executive impersonation, recruiting fraud, vendor onboarding fraud |
| NGO source | Hieu Minh Ngo (former hacker prosecuted by U.S. for identity theft, now combats fraud with Chong Lua Dao) shared screenshots showing Haotian AI offering physical installation "in some areas of Cambodia," plus a video appearing to show customer support staff installing the software in a Phnom Penh office building |

What Cox Saw on the Test Call

The operational test is the part of the 404 Media reporting that defenders should anchor on. Cox describes joining a Microsoft Teams call where the other participant was running Haotian AI to impersonate him — that is, the operator's face was being shapeshifted into Cox's face in real time on the video feed Cox saw. The deepfake mirror moved when Cox moved. It pinched its cheek when Cox pinched his cheek. It covered its nose when Cox covered his nose. It stroked its chin when Cox stroked his chin. None of those movements broke the illusion. The realtime shapeshifting is the technical capability that earlier deepfake tooling did not have at this fidelity. Cox's framing is direct: "Whereas video deepfakes used to be about superimposing someone's face onto an existing video, the tool I was using promised something else: the ability to shapeshift into someone — anyone — live during a video call."

The technical underpinnings, per 404 Media's analysis, are less impressive than the user-facing experience. Haotian AI is likely built on open-source face-swap tools that have been publicly available for years. The commercial value the Chinese-language sellers provide is technical support — installation, configuration, the user interface that lets customers adjust their deepfake's nose size or eye shape, and operational guidance for using the tool against specific platforms. This matters because it tells defenders something important about the threat curve. The capability is not gated by access to proprietary models or expensive infrastructure; it is gated by user-experience polish and operational support, both of which are commodity goods. As soon as one realtime deepfake tool is commercially available, others will follow. Haotian AI is not the unique outlier; it is the first one a journalist managed to test.

Detection Tools Are Already Behind

The most concerning empirical detail in the 404 Media reporting is the academic detection result. Xception is one of the leading published deepfake detection models. In a paper published in June 2025, Charles Fross and colleagues benchmarked Xception against Haotian AI's outputs. Xception hit 89.1 percent accuracy on control samples (non-Haotian deepfakes plus authentic videos), which is in line with its published performance. Against Haotian samples specifically, Xception misclassified nearly 100 percent as "authentic." Fross told 404 Media: "Haotian's work is shockingly convincing, especially with how it handles facial and body movements." The implication for organizations evaluating commercial deepfake-detection products: most of them are built on the same underlying model architectures as Xception, and most are trained on similar data distributions. There is no reason to believe commercial detection products are doing meaningfully better than Xception against this specific tool, and 404 Media's reporting is the first publicly evaluated case study against a current realtime deepfake.

CyberSignal's social engineering coverage tracks the broader pattern of identity-verification controls being eroded by AI-generated content.

The defender response cannot rest on detection alone. Organizations whose fraud-defense or executive-impersonation playbooks include "if it looks suspicious, hop on a video call to verify" are operating on outdated assumptions. The video call is no longer the corroborating signal it was even 12 months ago. Multi-channel verification — combining video with phone callback to a known number, with a Slack/Teams message from the requestor's verified account, with email from the requestor's verified domain, and for very large transactions with in-person or notarized authorization — is the new floor for high-stakes identity-trust decisions.
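
As a rough illustration of the multi-channel floor described above, the following Python sketch encodes the rule as a policy check. The channel names, the two dollar thresholds, and the `is_sufficiently_verified` helper are all hypothetical and chosen only for illustration; the rule that sub-threshold requests need a single confirmation is likewise an assumption, not something the reporting specifies.

```python
from dataclasses import dataclass, field
from enum import Enum


class Channel(Enum):
    VIDEO_CALL = "video_call"
    PHONE_CALLBACK = "phone_callback"    # callback to a number already on file
    VERIFIED_CHAT = "verified_chat"      # Slack/Teams message from a verified account
    VERIFIED_EMAIL = "verified_email"    # email from the requestor's verified domain
    IN_PERSON_OR_NOTARIZED = "in_person_or_notarized"


# Hypothetical thresholds; set these from your own risk policy.
HIGH_VALUE_THRESHOLD = 50_000
VERY_LARGE_THRESHOLD = 1_000_000


@dataclass
class TransferRequest:
    amount: float
    confirmed_channels: set = field(default_factory=set)


def is_sufficiently_verified(request: TransferRequest) -> bool:
    """Return True only if the request meets the multi-channel floor."""
    confirmed = request.confirmed_channels
    if request.amount < HIGH_VALUE_THRESHOLD:
        # Assumption: below the threshold, any single confirmation is enough.
        return len(confirmed) >= 1
    # High-value: at least two distinct channels, so video alone never passes.
    if len(confirmed) < 2:
        return False
    # Very large: additionally require in-person or notarized authorization.
    if request.amount >= VERY_LARGE_THRESHOLD:
        return Channel.IN_PERSON_OR_NOTARIZED in confirmed
    return True
```

Under these assumed thresholds, a 75,000 request confirmed only by video call is rejected, while the same request confirmed by video plus a phone callback passes. Video still counts as one input, but it can never be the only one.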

The Cambodia and Scam-Compound Connection

404 Media's reporting connects Haotian AI to the broader Southeast Asian scam-compound ecosystem and Chinese money-laundering networks. Hieu Minh Ngo — a former hacker who was prosecuted by the U.S. for identity theft and now works to combat fraud with the Vietnamese cybercrime NGO Chong Lua Dao — provided 404 Media with screenshots showing Haotian AI advertising a physical installation service "in some areas of Cambodia," and a video appearing to show Haotian AI customer support staff installing the software inside a Phnom Penh office building. The scam-compound infrastructure of Cambodia, Myanmar, and Laos has been documented extensively by the United Nations Office on Drugs and Crime and by investigative outlets including 60 Minutes, Reuters, and ProPublica — these are large-scale operations where trafficked workers conduct pig-butchering, romance, investment, and identity-fraud scams from compound facilities. Haotian AI is one tool in that operational stack; the realtime deepfake capability adds video-call impersonation to the existing toolkit of fake personas, fake apps, and fake exchanges.

The U.S. police-department impersonation 404 Media documented is the specific demonstrator that should give defenders pause. Haotian AI's marketing materials show the tool being used to impersonate at least one U.S. police department — a use case that supports virtual-kidnapping scams, tax-fraud impersonation calls, and the broader category of authority-impersonation fraud. Tax-season impersonation calls ("This is the IRS — you owe back taxes — provide payment information now or warrants will be issued") are a long-running and lucrative scam category; adding a realtime deepfaked badge-wearing officer to the call shifts the credibility calculus for victims who have been trained to ask for visual confirmation. The threat surface is not theoretical.

Defender Actions for the Next 30 Days

- Brief executive teams that video calls are no longer reliable identity verification. This is the single most important governance message from the 404 Media reporting. Update wire-transfer authorization, executive-impersonation defense, and any procedure that uses "I jumped on a video call to confirm" as a trust anchor. Document the change; train against it; test against it.

- Implement multi-channel verification for high-value transactions. Any wire transfer above your established threshold requires verification across at least two independent channels — not just video. Add a callback to a known phone number, a Teams or Slack message from the requestor's verified account on a corporate-managed device, and for very large transfers in-person or notarized authorization. Make this a hard policy, not a recommendation.

- Update HR and recruiting onboarding controls. The North Korean IT-worker-scheme threat surface gets substantially worse when the candidate can deepfake themselves to match a stolen identity's photos. Consider in-person final-round interviews for any role with privileged-system access. For fully remote hires, add periodic video-and-document re-verification. Make ID-verification a multi-step process that includes live document inspection, behavioral cues that are difficult to fake on camera, and references checked through independent channels.

- Add a deepfake-fraud category to your incident response playbook. "Suspected deepfake-enabled fraud" should have its own response: preserve video call recordings, capture metadata (IP addresses, account identifiers, platform-side telemetry), preserve email and chat context surrounding the call, and engage law enforcement early. This is a fast-moving evidence category — call recordings auto-delete on most platforms within 30 to 90 days.

- For organizations evaluating commercial deepfake-detection products: ask vendors specifically how their products perform against current realtime deepfake tools, and request evidence rather than marketing claims. Xception's near-zero detection rate against Haotian samples is a useful benchmark. Vendors whose products do not meaningfully outperform Xception on current realtime tools are not worth deploying for the executive-impersonation use case.
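
The incident-response item in the list above could be tracked with something as simple as the following Python sketch. The `DeepfakeIncident` class, its field names, and the evidence item labels are hypothetical. The 30-day constant is an assumption that plans around the short end of the 30-to-90-day retention window mentioned above; check the actual retention settings of your own platforms.

```python
from dataclasses import dataclass, field
from datetime import date, timedelta

# Assumption: plan around the short end of the 30-to-90-day window.
ASSUMED_RETENTION_DAYS = 30

# Evidence categories taken from the playbook item above (labels are illustrative).
EVIDENCE_ITEMS = (
    "video_call_recording",
    "ip_addresses",
    "account_identifiers",
    "platform_telemetry",
    "email_and_chat_context",
)


@dataclass
class DeepfakeIncident:
    call_date: date
    preserved: set = field(default_factory=set)
    law_enforcement_contacted: bool = False

    def preserve_by(self) -> date:
        """Latest date by which evidence should be exported, per the assumed window."""
        return self.call_date + timedelta(days=ASSUMED_RETENTION_DAYS)

    def outstanding(self) -> list:
        """Evidence items not yet preserved, in checklist order."""
        return [item for item in EVIDENCE_ITEMS if item not in self.preserved]


incident = DeepfakeIncident(date(2026, 5, 7), {"video_call_recording"})
print(incident.preserve_by(), incident.outstanding())
```

The point of the deadline field is to make the evidence clock visible from the moment the incident is opened, rather than discovering after the fact that a recording has already been purged.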

The CyberSignal Analysis

Signal 01 — Video calls are no longer identity verification

This is the single most consequential governance message of the 404 Media reporting and the one CISOs should communicate to their boards this quarter. The historical assumption that video calls provide a corroborating identity signal — distinct from voice, distinct from email, distinct from chat — is collapsing. Realtime deepfake tools that work on the major business and consumer video platforms are commercially available, run on hardware that fits in a gaming-PC budget, generate output that academic detectors cannot reliably distinguish from authentic, and have an established commercial ecosystem with a Cambodia-based operations footprint. Any control framework that uses "we got on a video call to verify" as a trust anchor is operating on outdated assumptions. The replacement is not a single new control; it is a multi-channel verification posture where video is one input among several, and the high-stakes decisions require independent corroboration through phone callback, verified-account messaging, and (for very large transactions) in-person or notarized authorization.

Signal 02 — The threat curve is gated by operational support, not model capability

404 Media's assessment that Haotian AI is likely built on open-source face-swap tools is the most strategically important detail in the entire investigation. The commercial value Haotian AI provides its scammer customers is operational support: installation, configuration, the polished user interface, the platform-specific guidance for WhatsApp and Teams and TikTok, the Cambodia-based physical-install service. The underlying machine learning capability is not gated by access to proprietary models. This means the threat curve is not bottlenecked by AI lab releases; it is bottlenecked by who is willing to build and support the operational packaging. Defenders should expect rapid proliferation of competing realtime deepfake products through 2026 and 2027 — some Chinese-operated like Haotian, some Russian-operated, some operated from anywhere with criminal infrastructure tolerance. The capability set will widen and the price will fall. Any defensive posture built on "the technology isn't quite good enough yet" is operating on a clock that has already run out.

Signal 03 — Detection-product claims need independent benchmarking before deployment

The Charles Fross paper showing Xception's near-total failure on Haotian AI samples is a useful empirical benchmark for evaluating commercial deepfake-detection vendors. Most enterprise detection products derive from research-architecture lineages similar to Xception's, and most are trained on benchmark datasets that predate current realtime tools. Vendor claims of 95-percent-plus detection accuracy on benchmark datasets do not necessarily translate to performance against operationally current tools like Haotian. CISOs and procurement teams evaluating these products should ask three specific questions: which realtime deepfake tools were included in the vendor's most recent benchmark, what the product's true-positive rate was against those tools (not against archival deepfake samples), and what the product's update cadence is for incorporating new tools as they emerge. The honest answer for many vendors will be "we don't yet have a benchmark against the current commercial Chinese tools," and that itself is informative. Defensive deployment without that information is a confidence purchase, not a security purchase.
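
To make the per-tool question concrete, here is a small Python sketch of how a procurement team might break a vendor's results out by generating tool instead of accepting one aggregate figure. The `detection_rate_by_tool` helper is hypothetical, and the sample counts are invented purely for illustration; they are not taken from the Fross paper or from any vendor.

```python
from collections import defaultdict


def detection_rate_by_tool(results):
    """Compute the true-positive rate per generating tool.

    results: iterable of (tool_name, was_flagged_as_fake) pairs,
    covering deepfake samples only.
    """
    flagged = defaultdict(int)
    total = defaultdict(int)
    for tool, was_flagged in results:
        total[tool] += 1
        if was_flagged:
            flagged[tool] += 1
    return {tool: flagged[tool] / total[tool] for tool in total}


# Hypothetical vendor test set, invented for illustration only:
# 900 archival samples (855 caught) and 100 realtime-tool samples (2 caught).
sample_results = (
    [("archival_faceswap", True)] * 855
    + [("archival_faceswap", False)] * 45
    + [("realtime_tool", True)] * 2
    + [("realtime_tool", False)] * 98
)

rates = detection_rate_by_tool(sample_results)
overall = sum(flagged for _, flagged in sample_results) / len(sample_results)
print(rates, overall)
```

With these made-up counts, the aggregate detection rate looks respectable while the rate on the realtime tool is close to zero, which is the pattern a single headline accuracy number can hide when archival samples dominate the test set.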

Sources

| Type | Source |
|---|---|
| Primary | 404 Media (Joseph Cox): HELLO BOSS: Inside the Chinese Realtime Deepfake Software Powering Scams Around the World |
| Source attribution | Hieu Minh Ngo via Chong Lua Dao (Vietnamese cybercrime-fighting NGO): Cambodia operational base, Phnom Penh installation service evidence |
| Detection benchmark | Charles Fross et al. (June 2025 paper): Xception 89.1% on controls vs. ~100% misclassification of Haotian samples as authentic |
| Context | AIAAIC Repository: Xinhua Deepfake News Anchors (historical Chinese deepfake context) |